* In the mid-20th century, researchers became increasingly aware of the effects of human activities on the environment. This has led to ever-growing efforts to understand and control this impact, with one of the most prominent aspects being "environmental chemistry" -- the study of the normal chemical interactions of the Earth's environment and how human activities affect them.

* All heavenly bodies have an environment of sorts, but not necessarily a very complex one: our airless Moon is characterized by vacuum, rock, and dirt. The Earth, in contrast, has a very complicated environment that we still don't understand in adequate detail. The Earth is a rocky planet with a diameter of about 12,750 kilometers and a mass of about 6 x 10^21 tonnes. Its elemental composition by mass is roughly as follows:

__________________

iron: 34.6%

oxygen: 29.5%

silicon: 15.2%

magnesium: 12.7%

nickel: 2.4%

sulfur: 1.9%

titanium: 0.05%

__________________

The Earth has a layered arrangement, with a thin external crust and a number of deeper layers down to an iron core, the outer part of which is molten. The crust is divided into about a dozen major "tectonic plates". These are rigid in themselves but can move relative to each other. New rock wells up at one edge along the "mid-ocean ridges" of undersea volcanoes, and the plates are pushed slowly back down into the Earth at the other edge, at "oceanic trenches". This phenomenon is now known as "plate tectonics", and the resulting slow wandering of the continents is known as "continental drift".

About 70.8% of the surface is covered with water, mostly in the Earth's salt-water oceans. Only about 3% of the Earth's water is fresh. About two-thirds of that is locked up in icecaps and glaciers, and most of the remaining third is held underground as groundwater; only a small fraction is found in fresh-water lakes and rivers.

Current evidence from radioactive dating points to the Earth being about 4.5 billion years old. In the beginning, the Earth's atmosphere was nothing like it is today, being heavily loaded with carbon dioxide and nitrogen. There was no free oxygen to speak of: oxygen is reactive and readily combines with other elements, such as iron, to form oxide minerals that remove it from the air. Carbon dioxide, for its part, was gradually drawn out of the air into carbonate minerals, such as limestone (calcium carbonate / CaCO3) or magnesium carbonate (MgCO3). Oxygen only began to appear in the Earth's atmosphere after organisms arose that could produce it, 2.2 billion or more years ago. It did not become a significant component of the atmosphere until about 700 million years ago, resulting in more or less the atmosphere as we know it today.

In the modern era, the primary components of the Earth's atmosphere are:

These figures assume no water vapor, but the water content of the atmosphere can vary over time and place, ranging from near zero to as much as 4% by number of molecules. The remaining fraction of the atmosphere is a mix of trace gases, measured in parts per million:

The upper layers of the atmosphere have relatively high concentrations of ozone, O3, produced by ultraviolet radiation from the Sun breaking up O2 molecules. The "ozone layer" provides a safety barrier for the Earth's organisms, ozone being opaque to much of the high-energy ultraviolet radiation that sleets down from space.

The Earth's land, sea, and atmosphere exist in a complicated equilibrium, with weather patterns transporting water vapor, particularly from the tropical seas, to become rainfall elsewhere. Erosion and volcanic processes slowly adjust the shape of the land over long aeons, changing the configuration of the seas and influencing climate patterns. The relatively thin layer of organisms that covers the Earth -- the "biosphere" -- both is affected by and affects this shifting equilibrium.

While natural processes do change the Earth's environment over time, as the human population has climbed into the billions, human activities have begun to have a major and worrying impact on the operation of the world's systems. The rest of this chapter addresses this issue in more detail.

* The fact that human activities could have a damaging effect on the environment was long more or less ignored. Deforestation and short-sighted agricultural practices often resulted in the "desertification" of lands once green and fertile. With the arrival of the Industrial Revolution, pollution from factory smokestacks and the production of chemicals became widespread. However, public awareness of environmental issues did not take hold until after World War II. In December 1952, unusual weather conditions carpeted London with a smoky fog, or "smog", for the better part of a week, killing thousands of people.

Another one of the roots of modern environmental consciousness was the pesticide DDT, which came into large-scale use during World War II. It was a strategic tool during the conflict, allowing control of mosquitoes that carried malaria and other diseases, and was put to widespread use to protect civilian populations after the war. However, research indicated that DDT became increasingly concentrated in organisms further up the food chain. This became a public issue after American biologist Rachel Carson (1907-1964) published her famous book SILENT SPRING in 1962. Although some believe Carson overstated the case against pesticides, the book helped create the modern environmental movement, and from that time, like it or not, the environmental impact of chemicals was an issue that couldn't be ignored.

From the mid-1960s, environmental regulations controlling the emission or dumping of toxic substances were established in industrialized nations. Companies that dumped toxic chemicals were fined, and improved techniques for disposal of public and industrial wastes were developed to prevent contamination of the soil and water. Remediation of wastes focused on incineration or chemical neutralization, or when that wasn't possible, disposal in secure toxic waste dumps.

The issue is not a simple one, and work on the matter continues, with "green chemistry" efforts now a prominent component of chemical research, focusing on a number of avenues of investigation:

* The most visible component of work to reduce environmental pollution has been air pollution control. Motor vehicles are a significant source of air pollution. Ideally, a combustion process should produce only nontoxic emissions, such as diatomic nitrogen (N2), water, and CO2. Real engines, however, also produce nasty NO and NO2 (generally called "NOx", and definitely not the same sort of beast as N2O or "laughing gas"), which form when nitrogen and oxygen from the air combine at high combustion temperatures. Incomplete combustion adds carbon monoxide (CO) and partly burned hydrocarbons. Worse, the effect of sunlight on NOx produces reactive and toxic ozone (O3), a particularly unpleasant component of air pollution:

NO2 --UV--> NO + O

O + O2 --> O3

Most modern vehicles have emission control systems. The first line of defense has been to develop engines with more efficient combustion, using higher operating temperatures; improved combustion chambers that encourage better mixing of air and fuel; and smarter electronic ignition systems. This has the side benefit of improving fuel efficiency, though at added cost. The second line of defense is a "catalytic converter" in the exhaust line. It converts harmful exhaust components, such as NOx, CO, and unburned hydrocarbons, into benign emissions such as N2, water, and CO2 -- though as discussed below, these days CO2 isn't seen as entirely benign.

* Power plants and factories also now feature sophisticated emission control systems. A modern coal-fired powerplant burns reasonably cleanly, but coal is not a particularly clean fuel. It contains minerals that won't burn and end up as particulate ash. It also contains sulfur, which becomes sulfur dioxide on combustion. In the air, the sulfur dioxide is further oxidized and combines with water to form sulfuric acid, producing "acid rain":

2SO2 + O2 --> 2SO3

SO3 + H2O --> H2SO4
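The reactions in this document are written as plain-text equations. The minimal Python sketch below checks that each one conserves atoms, by counting every element on both sides. It assumes simple formulas without parentheses or hydrate notation. The helper names are hypothetical and do not come from any chemistry library.

```python
import re
from collections import Counter


def parse_formula(formula: str) -> Counter:
    """Count atoms in a simple formula such as 'H2SO4' (no parentheses or hydrates)."""
    atoms = Counter()
    for element, count in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        atoms[element] += int(count) if count else 1
    return atoms


def side_atoms(side: str) -> Counter:
    """Total atoms on one side of an equation, e.g. '2SO2 + O2'."""
    total = Counter()
    for term in side.split("+"):
        # Optional leading coefficient, then the formula itself.
        match = re.fullmatch(r"(\d*)(\S+)", term.strip())
        coefficient = int(match.group(1)) if match.group(1) else 1
        for element, count in parse_formula(match.group(2)).items():
            total[element] += coefficient * count
    return total


def is_balanced(equation: str) -> bool:
    """True if 'reactants --> products' (or '--UV-->') conserves every element."""
    left, right = re.split(r"--(?:UV--)?>", equation)
    return side_atoms(left) == side_atoms(right)


reactions = [
    "NO2 --UV--> NO + O",
    "O + O2 --> O3",
    "2SO2 + O2 --> 2SO3",
    "SO3 + H2O --> H2SO4",
]
for reaction in reactions:
    print(f"{reaction:22s} balanced: {is_balanced(reaction)}")
```

The same checker accepts the other equations in this chapter, such as the CFC breakdown reaction, as long as they keep this plain-text format.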

The "fly ash" that flies up the flue has to be removed, this being done either by a "baghouse" or an "electrostatic precipitator". A baghouse is conceptually simple, just a set of heavy cloth filters in the form of long tubes open at one end, with the cloth allowing the gases to pass through while capturing the fly ash. Since the fly ash will eventually clog the bags, the airflow is reversed occasionally to force out the ash, which falls down into a hopper for removal. Some baghouses have a shaker system to help dislodge the ash.

An electrostatic precipitator consists of rows of vertical plates, with arrays of fine wires energized to high voltage arranged between the plates. The gas passes up through the gaps between the plates, with the ash particles acquiring an electric charge and sticking to the plates. A "rapper" system knocks the ash loose into a hopper for collection.
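The text describes precipitator performance only qualitatively. A common textbook idealization, the Deutsch-Anderson equation, estimates the collected fraction as 1 - exp(-w * A / Q). Here w is the speed at which charged particles drift toward the plates, A is the plate area, and Q is the gas flow. The sketch below is a minimal illustration under that idealized model. The numbers are hypothetical, not data for any real plant.

```python
import math


def esp_collection_efficiency(migration_velocity: float, plate_area: float, gas_flow: float) -> float:
    """Deutsch-Anderson estimate of the fraction of fly ash a precipitator captures.

    migration_velocity -- speed (m/s) at which charged particles drift toward the plates
    plate_area         -- total collecting plate area (m^2)
    gas_flow           -- volume of flue gas passing through per second (m^3/s)
    """
    return 1.0 - math.exp(-migration_velocity * plate_area / gas_flow)


# Hypothetical, illustrative values, not data for any real plant.
MIGRATION_VELOCITY = 0.1  # m/s
GAS_FLOW = 100.0          # m^3/s

for area in (2500.0, 5000.0, 10000.0):
    captured = esp_collection_efficiency(MIGRATION_VELOCITY, area, GAS_FLOW)
    print(f"{area:8.0f} m^2 of plate: {captured:.3%} captured, {1 - captured:.3%} escapes")
```

In this idealization the escaping fraction falls exponentially with plate area. Doubling the area squares the escaping fraction rather than merely halving it.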

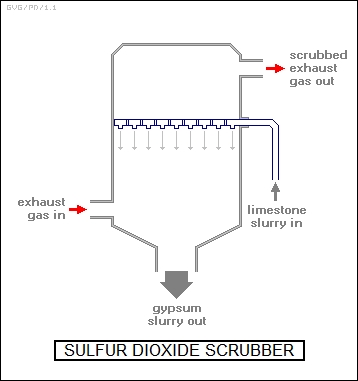

Not surprisingly, neither of these schemes works for sulfur dioxide, which is a gas. A "scrubber" system is used instead, installed "downstream" from the baghouse or electrostatic precipitator system. Scrubbers are tall cylinders into which a slurry of ground-up limestone -- calcium carbonate (CaCO3) -- is sprayed down from the top. The calcium carbonate reacts with the sulfur dioxide, releasing carbon dioxide and forming calcium sulfite. The calcium sulfite is then oxidized by air blown into the slurry to form calcium sulfate, or gypsum (CaSO4*2H2O).

The gypsum is collected in a hopper and hauled off to a dump; the process produces a substantial amount of gypsum, and disposing of it is troublesome. Not all coal-fired powerplants have scrubbers: some coal has low sulfur content and doesn't need one. Incidentally, the stack of a coal-fired power plant will sometimes emit a visible white plume. News clips often show the plume when discussing powerplant emissions, but it's not smoke. It's water vapor from the scrubber condensing into droplets, and the actual emissions are mostly invisible. In cold weather, the plume may appear whether there's a scrubber or not. A number of powerplants also have a "selective catalytic reduction" system to help get rid of toxic NOx, with the NOx catalytically reacting with ammonia (NH3) to form nitrogen and water.
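Why is there so much gypsum? The answer follows from the chemistry. The sketch below assumes the idealized overall reaction CaCO3 + SO2 + 1/2 O2 + 2H2O --> CaSO4*2H2O + CO2, with exactly one mole of limestone per mole of SO2. Real scrubbers typically run with some excess limestone, so treat the numbers as an ideal-case estimate. The atomic weights are rounded standard values, and the function names are hypothetical.

```python
# Standard atomic weights in g/mol, rounded.
ATOMIC_WEIGHT = {"H": 1.008, "C": 12.011, "O": 15.999, "S": 32.06, "Ca": 40.078}


def molar_mass(atoms: dict) -> float:
    """Molar mass (g/mol) from a dict of element -> atom count."""
    return sum(ATOMIC_WEIGHT[element] * count for element, count in atoms.items())


SO2 = molar_mass({"S": 1, "O": 2})
CACO3 = molar_mass({"Ca": 1, "C": 1, "O": 3})
GYPSUM = molar_mass({"Ca": 1, "S": 1, "O": 6, "H": 4})  # CaSO4*2H2O
CO2 = molar_mass({"C": 1, "O": 2})


def scrubber_mass_balance(tonnes_so2: float) -> dict:
    """Ideal 1:1 molar ratios for CaCO3 + SO2 + 1/2 O2 + 2H2O --> CaSO4*2H2O + CO2."""
    moles = tonnes_so2 / SO2  # "tonne-moles"; the unit cancels out in the ratios
    return {
        "limestone consumed": moles * CACO3,
        "gypsum produced": moles * GYPSUM,
        "CO2 released": moles * CO2,
    }


for item, tonnes in scrubber_mass_balance(1.0).items():
    print(f"{item:18s} {tonnes:.2f} t per tonne of SO2 captured")
```

Under these assumptions, each tonne of SO2 captured takes roughly 1.6 tonnes of limestone and yields roughly 2.7 tonnes of gypsum. The scrubber also releases some CO2 in the process.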

* Environmental problems have a nasty tendency to appear out of nowhere. Early household refrigerators used noxious gases such as sulfur dioxide and ammonia as coolant fluids, with documented cases of families being killed by coolant leaks. In the 1930s, chlorofluorocarbons (CFCs) were introduced as a replacement coolant, and they seemed all but perfect for the job: they were effective, cheap, nonflammable, noncorrosive, and in particular nontoxic. A person can breathe CFCs and suffer no harm, except from oxygen deprivation.

By the 1970s, CFCs were not only in widespread production and use as coolant fluids in refrigerators and air conditioners, but were also used as "blowing agents" to bubble up foam plastic insulation, as cleaning agents, and as spray propellants. In 1974, however, researchers proposed that CFCs might well be depleting the ozone layer. Once released, the CFCs could migrate to high altitudes and be broken apart by ultraviolet radiation that doesn't reach lower altitudes:

CF2Cl2 --UV--> CF2Cl + Cl

The reactive chlorine atoms would then react with ozone in a two-step process to produce oxygen:

Cl + O3 --> ClO + O2

ClO + O --> Cl + O2

The particularly unpleasant thing about this reaction was that it was catalytic. The chlorine was not consumed in the reaction, which meant that a small amount of chlorine could convert a vastly larger amount of ozone into diatomic oxygen. The argument was theoretical at the time. By the mid-1980s, however, ground-based and satellite observations were showing a growing region of ozone depletion over the South Pole, with the "ozone hole" getting bigger every southern spring. The matter became a public controversy.
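To see why the chlorine counts as a catalyst, add the two steps together and cancel anything that appears on both sides. Below is a minimal Python sketch of that bookkeeping. It assumes the plain-text equation format used in this document, and the helper names are hypothetical.

```python
import re
from collections import Counter


def species(side: str) -> Counter:
    """Species counts on one side of an equation, e.g. 'ClO + O' -> {ClO: 1, O: 1}."""
    counts = Counter()
    for term in side.split("+"):
        match = re.fullmatch(r"(\d*)(\S+)", term.strip())
        counts[match.group(2)] += int(match.group(1)) if match.group(1) else 1
    return counts


def net_reaction(steps: list) -> tuple:
    """Add up a sequence of reaction steps and cancel species that appear on both sides."""
    net = Counter()
    for step in steps:
        left, right = re.split(r"--(?:UV--)?>", step)
        net.subtract(species(left))   # consumed in this step
        net.update(species(right))    # produced in this step
    consumed = {s: -n for s, n in net.items() if n < 0}
    produced = {s: n for s, n in net.items() if n > 0}
    cancelled = sorted(s for s, n in net.items() if n == 0)
    return consumed, produced, cancelled


def side_text(side: dict) -> str:
    return " + ".join(f"{n if n > 1 else ''}{s}" for s, n in side.items())


ozone_cycle = ["Cl + O3 --> ClO + O2", "ClO + O --> Cl + O2"]
consumed, produced, cancelled = net_reaction(ozone_cycle)
print("net:", side_text(consumed), "-->", side_text(produced))
print("regenerated or intermediate:", cancelled)
```

Chlorine drops out of the net reaction, O3 + O --> 2O2. After each cycle, the same chlorine atom is free to attack another ozone molecule. ClO is only an intermediate, formed and consumed within the cycle.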

The fuss over CFCs tended to give the public the impression that CFCs are toxic. The reality is that they are very inert, extremely safe in themselves, vastly safer than the noxious coolants they replaced -- the irony being that the problem with them is their very lack of reactivity. Once they escape into the atmosphere, they persist and migrate upward into the stratosphere. There, intense solar ultraviolet breaks them down, releasing chlorine that depletes ozone and potentially thins the protective ozone layer.

Not everyone agreed that CFCs were a real threat. There was no serious dispute that CFCs could cause ozone depletion, but the depletion was strongly enhanced by cold temperatures. That was why the Antarctic ozone hole only appeared in the southern spring, after the extreme cold of the polar winter. On that basis, it was possible to argue that ozone depletion by CFCs would not amount to a threat at lower latitudes. However, on the basis of the notion of "better safe than sorry", in 1987 representatives from 43 nations signed the "Montreal Protocol", which mandated the gradual phaseout of CFCs.

CFCs were to be replaced by hydrofluorocarbons (HFCs). HFCs contain no ozone-depleting chlorine, though they are less efficient refrigerants and more expensive. However, HFCs fell out of favor in the next century, as discussed below.

* Although it seemed in the 1970s and 1980s that the air pollution challenge was being met, in the 1990s worries began to spread that human activities had an effect that promised to be much harder to deal with: global warming, leading to chaotic climate change.

Over the past 3 million years, the world has gone through a sequence of Ice Ages, periods in which glaciers increasingly covered the Earth, to then fade away for a time. In 1896, Svante Arrhenius published a paper in which he suggested that Ice Ages might be linked to atmospheric concentrations of CO2. The Sun pours light down on the Earth, heating it up; the warm Earth then produces infrared radiation, much of which escapes off into space. Atmospheric CO2 tends to "trap" infrared radiation, preventing it from escaping and making the Earth warmer; in modern terms, CO2 is a "greenhouse gas". The trapping effect rises with CO2 concentration, though not in simple proportion. As Arrhenius himself worked out, each doubling of concentration adds roughly the same increment of warming. Low CO2 concentrations might therefore have led to the Ice Ages.

There was concern at the time, and later, that the Earth was headed for another Ice Age, which would undoubtedly have a brutal impact on human population, but in a later book Arrhenius suggested: not to worry. Human industrial emissions of CO2 would be strong enough to prevent the Earth from slipping back into another Ice Age, and the warmer Earth that would result from these high CO2 levels would allow humans to grow more crops to feed an expanding population.

Climate scientists generally believed that Arrhenius was right in principle, but in the period after World War II there was actually a cooling trend. Some climate scientists -- a minority, it seems -- even suggested that a new Ice Age might be imminent. As late as 1975, the US magazine NEWSWEEK ran an article titled "The Cooling World", which predicted that a disastrous Ice Age was then in the making.

However, the midcentury cooling trend, it turned out, was ironically also due to emissions -- of particulate pollutants, which reflected sunlight back into space and helped cool the world. Effective pollution control measures reduced the concentration of particulates, and the temperature began to climb again. By the 1990s, climatologists had become increasingly worried about what might happen to the Earth if CO2 concentrations continued their climb, and spoke out about their concerns.

* At that time, there was considerable public skepticism over "anthropogenic global warming (AGW)" -- human-caused climate change -- with the climate research community accused of sloppy research, hysteria, even fraud. It would take about two decades to resolve the dispute.

The foundation of global climate theory is a simple fact established by thermodynamics. For a planet to maintain a constant temperature, the energy it absorbs from sunlight must be matched by the energy it loses to space as infrared thermal radiation, and the intensity of this radiation increases with temperature. The Earth absorbs an average of about 239 watts of sunshine per square meter; a simple body re-radiating that energy back into space would have an average temperature of -18 degrees Celsius -- about zero Fahrenheit.
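The -18 degree figure follows from the Stefan-Boltzmann law: a body at absolute temperature T radiates sigma * T^4 watts per square meter. Setting that equal to the 239 W/m^2 absorbed and solving for T gives the "simple body" temperature. This is a minimal sketch. It assumes the Earth radiates like a perfect blackbody (emissivity 1) at a single uniform temperature, and the function name is hypothetical.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)


def radiative_equilibrium_temperature(absorbed_flux: float, emissivity: float = 1.0) -> float:
    """Temperature (K) at which a body radiates away exactly the flux it absorbs.

    Solves emissivity * SIGMA * T**4 = absorbed_flux for T.
    """
    return (absorbed_flux / (emissivity * SIGMA)) ** 0.25


t_kelvin = radiative_equilibrium_temperature(239.0)  # 239 W/m^2, from the text
t_celsius = t_kelvin - 273.15
t_fahrenheit = t_celsius * 9 / 5 + 32
print(f"{t_kelvin:.1f} K = {t_celsius:.1f} C = {t_fahrenheit:.1f} F")
```

The result lands a little below 255 K, or about -18 degrees Celsius. That is some 33 degrees colder than the Earth's actual average surface temperature. The gap is the greenhouse effect.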

Clearly, on average the Earth is warmer than that. The reason is that greenhouse gases in the Earth's atmosphere, like CO2, block the escape of infrared thermal radiation into space by absorbing it and re-emitting it. Incidentally, in the tenuous upper atmosphere, where greenhouse gases are too diffuse to have much of an effect, the average temperature really is about -18 degrees Celsius. Increasing the concentration of greenhouse gases makes it harder for the heat to leak out, with the surface of the Earth and the lower atmosphere heating up. The rise in temperature alters the way the atmosphere transports energy from the warm equator to the cold poles, changing weather patterns.

In any case, there is no doubt that greenhouse warming does take place, since otherwise the Earth would be an icebox. There are four principal greenhouse gases, in roughly descending order of importance: water vapor, carbon dioxide, methane, and ozone.

Anyone reading this list might have reason to feel puzzled, because it clearly shows that water vapor is the most important greenhouse gas. So why the fuss over CO2? The answer is that the effect of CO2 is far from negligible, ranging in possible value from about a tenth to a quarter of the whole -- and there are good reasons to believe CO2 is the "lever" in the system.

One of the characteristics of CO2 is that the "sinks" that draw it out of the atmosphere, most significantly through the photosynthetic operation of plants, operate slowly. That means that a rise in CO2 concentrations can take a long time to fall back down. Water, in contrast, simply falls out of the sky as precipitation, and water vapor concentrations can change very rapidly -- everybody knows that the weather can change from humid to dry overnight. CO2 concentrations don't, and can't, fluctuate anywhere near that rapidly.

Obviously, water vapor is produced by evaporation, mostly from the seas, and also obviously, an increase in global temperatures means a higher rate of evaporation. That suggests a temperature increase due to a rise in CO2 concentrations could well be amplified by positive feedback from an increase in water vapor concentrations. As noted, the concentration of CO2 in the atmosphere is small, a fraction of a percent. Measured by the total mass of each gas, that makes CO2 a disproportionately more effective greenhouse gas than water vapor, and so increments in the concentration of CO2 could have a disproportionate effect.

All that said, water vapor occupies an ambiguous position in the global warming debate. It is certainly a greenhouse gas and helps trap heat. However, as water vapor concentrations rise, it also produces more cloud cover -- and, in winter, more snowfields -- reflecting more sunlight back into space. To confuse matters further, clouds also absorb and re-emit infrared radiation from below, helping trap more heat. In addition, condensation of water droplets is an exothermic process that releases heat, and so formation of clouds tends to produce local warming. After considerable discussion, the general consensus in the climate research community is that more water vapor means, overall, more warming.

* So what does real-world data show? Measurements made since the 1950s show the level of CO2 rose from 316 parts per million (PPM) in 1959 to 387 PPM in 2009. Indirect measurements, such as analyses of air bubbles trapped in polar ice, suggest the rise began about 1750. It started from the 280 PPM that appears to have been the long-term average for the 10,000 years before that -- though everyone acknowledges that natural CO2 concentrations did tend to vary around that average.

The timing of the rise in CO2 concentration from 1750 tracks the rise in human population and industrialization. It is true that the relative proportion of human emissions of CO2 to natural emissions is small -- but while natural processes have been producing CO2 for a lot longer than humans have been around, they also provide sinks that soak up the CO2, keeping the levels roughly constant. Human activity has provided a persistent increment of CO2 emissions that natural processes can't quite keep up with, a trickle that is gradually leading to an overflow. Estimates suggest that humans produce CO2 in the range of 25 to 30 gigatonnes a year. The rate of growth needed to account for the observed parts-per-million increases is about 15 gigatonnes per year, only about half the human contribution, with natural sinks soaking up the rest.
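Linking parts per million to gigatonnes takes one conversion factor: the mass of CO2 that corresponds to 1 PPM of the whole atmosphere. The sketch below derives that factor from the mass of the atmosphere and the molar masses of air and CO2, all assumed standard approximate values not given in the text. It then applies the factor to the 1959-2009 figures quoted above. The growth rate of about 2 PPM per year toward the end of that period is an assumption added for illustration.

```python
# Approximate physical constants (assumed standard values, not from the text).
ATMOSPHERE_MASS_G = 5.15e21   # total mass of the atmosphere, grams
AIR_MOLAR_MASS = 28.97        # g/mol, dry air
CO2_MOLAR_MASS = 44.01        # g/mol
GRAMS_PER_GIGATONNE = 1e15

# 1 PPM by volume means 1 molecule of CO2 per million molecules of air.
moles_of_air = ATMOSPHERE_MASS_G / AIR_MOLAR_MASS
GT_CO2_PER_PPM = moles_of_air * 1e-6 * CO2_MOLAR_MASS / GRAMS_PER_GIGATONNE

# Figures from the text.
average_rate_ppm = (387 - 316) / (2009 - 1959)  # PPM per year, 1959-2009
human_emissions_gt = (25 + 30) / 2               # midpoint of the quoted range

# ASSUMED: growth of roughly 2 PPM per year toward the end of that period.
recent_rate_ppm = 2.0

for label, rate in (("1959-2009 average", average_rate_ppm), ("assumed recent", recent_rate_ppm)):
    retained = rate * GT_CO2_PER_PPM
    print(f"{label:18s} {rate:.2f} PPM/yr = {retained:4.1f} Gt CO2/yr "
          f"= {retained / human_emissions_gt:.0%} of human emissions")
```

Each PPM works out to roughly 7.8 gigatonnes of CO2. A growth rate of about 2 PPM per year therefore corresponds to the 15 or so gigatonnes a year mentioned above.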

But what is the actual effect of that overflow? It's not entirely clear from the data just how temperature rises with CO2 concentration, or in other words what the "sensitivity" of climate to CO2 concentration really is. Climate is a noisy phenomenon, making it hard to spot and track changes, and the oceans can absorb a good deal of heat, adding considerable inertia to the system.

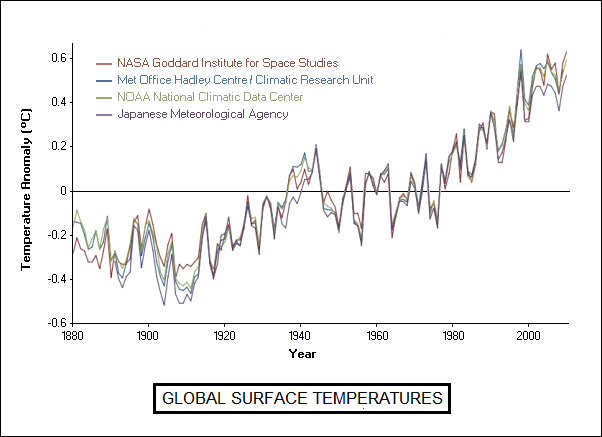

Climate records now available do support warming. Critics protested that analyses showing warming were biased, or suffered from confounding effects in measurements. However, all professional organizations that have analyzed the data, including national weather agencies, have come up with the same results, with all known confounding effects factored into the analyses.

* As far as sensitivity goes, theory backed up by lab studies on the absorption of infrared by CO2 suggests that, on its own, a doubling of CO2 concentration would add about 1 degree Celsius to the global temperature. Current projections show that CO2 concentrations will double from the pre-industrial level of 280 PPM to 560 PPM by 2070. Notice that the changes involve doubling: it would require raising concentrations to 1,120 PPM to raise temperatures another degree Celsius.
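"Each doubling adds the same increment" is another way of saying that warming grows with the logarithm of concentration. The sketch below assumes the no-feedback figure from the text (1 degree Celsius per doubling) and the 280 PPM pre-industrial baseline. Both are parameters, so other sensitivity values can be tried. The function names are hypothetical.

```python
import math


def co2_warming(c_ppm: float, c0_ppm: float = 280.0, per_doubling: float = 1.0) -> float:
    """Warming (deg C) if every doubling of CO2 adds the same increment."""
    return per_doubling * math.log2(c_ppm / c0_ppm)


def ppm_for_warming(delta_t: float, c0_ppm: float = 280.0, per_doubling: float = 1.0) -> float:
    """Concentration needed for a given warming: the inverse of co2_warming."""
    return c0_ppm * 2 ** (delta_t / per_doubling)


for c in (387, 560, 1120):
    print(f"{c:5d} PPM: {co2_warming(c):+.2f} C")
for degrees in (1, 2, 3):
    print(f"{degrees} C of warming needs {ppm_for_warming(degrees):,.0f} PPM")
```

Passing a larger `per_doubling` value, such as the 1.7 degrees that includes water-vapor feedback, scales every result proportionally. The doubling structure stays the same.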

On the face of it, a 1 degree Celsius change hardly seems worth making a fuss about. However, climate is complicated, and increasing CO2 has complicated effects -- particularly in terms of increasing the average concentration of water vapor, with its potential positive and negative feedback effects. The current consensus is that, overall, water vapor provides positive feedback; on that basis, theory says a doubling of CO2 concentration will produce a rise in temperature of 1.7 degrees Celsius.

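Everyone also admits that the climate scenarios are complicated and the data is difficult to handle. Researchers are stuck with wading through the bog, and have turned to computer modeling to see if they can make things clear. There are two classes of climate models. "Energy balance models (EBMs)" track the overall balance between incoming and outgoing energy. "General circulation models (GCMs)" divide the atmosphere and oceans into a three-dimensional grid of cells and simulate the physics in each.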

EBMs are simpler, but somewhat ad hoc. GCMs are more difficult to implement, since they involve vast numbers of cells and require considerable computer power. In fact, given current computer hardware, it's out of the question to build a global model with cells approaching, say, a kilometer on a side, since the computing requirements would go through the roof and climb towards the Moon. However, GCMs are regarded as more robust, since they are rooted in basic climate physics.
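To make the EBM idea concrete, here is about the simplest possible energy balance model. It is zero-dimensional: the whole planet is one temperature, warmed by absorbed sunlight and cooled by thermal radiation. The model steps forward one day at a time until the two balance.

The solar constant and albedo are approximate standard values; together they give about 238 W/m^2 absorbed, close to the 239 quoted earlier. The effective emissivity and heat capacity are hypothetical tuning values chosen for illustration, not parameters of any published EBM.

```python
SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W/(m^2 K^4)
SOLAR_CONSTANT = 1361.0  # sunlight at the top of the atmosphere, W/m^2
ALBEDO = 0.30            # fraction of sunlight reflected back to space
HEAT_CAPACITY = 4.0e8    # J/(m^2 K); HYPOTHETICAL, roughly a 100 m ocean surface layer
EMISSIVITY = 0.612       # HYPOTHETICAL effective emissivity standing in for greenhouse gases

SECONDS_PER_DAY = 86400.0


def absorbed_sunlight() -> float:
    # Sunlight is intercepted by a disk but spread over a sphere with four times its area.
    return SOLAR_CONSTANT * (1 - ALBEDO) / 4


def run_ebm(emissivity: float = EMISSIVITY, temp: float = 255.0, years: int = 200) -> float:
    """Step C dT/dt = absorbed - emissivity*SIGMA*T^4 forward one day at a time."""
    for _ in range(years * 365):
        imbalance = absorbed_sunlight() - emissivity * SIGMA * temp ** 4
        temp += imbalance * SECONDS_PER_DAY / HEAT_CAPACITY
    return temp


def equilibrium_temperature(emissivity: float = EMISSIVITY) -> float:
    """Closed-form steady state of the same model, as a check on run_ebm."""
    return (absorbed_sunlight() / (emissivity * SIGMA)) ** 0.25


for eps in (0.612, 0.60):
    print(f"emissivity {eps}: {run_ebm(eps):.1f} K after 200 years, "
          f"steady state {equilibrium_temperature(eps):.1f} K")
```

Lowering the effective emissivity is a crude stand-in for adding greenhouse gas, and it warms the steady state. Many EBMs go further, adding latitude bands, ice-albedo feedback, and heat transport. GCMs replace the single temperature with a vast grid of cells.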

Both types of models are good enough to accurately simulate features of the real-world climate system, such as monsoons, trade winds, and seasons. They also have the interesting feature that all of them predict global warming -- EBMs less so than GCMs -- and in fact, they tend to predict more warming than could be accounted for by simple theoretical considerations of the effects of CO2 and water vapor, the most extreme prediction being a drastic increase of 4.4 degrees Celsius.

The models also demonstrate that the climate's response depends on the concentration of aerosol particles. The eruption of Mount Pinatubo in the Philippines in 1991 injected a layer of sunlight-reflecting sulfate particles into the stratosphere, leading to a temporary cooling that fits the models as well. There is an ambiguity involved: some aerosol particles are reflective and provide a cooling effect, while others such as soot absorb sunlight and provide a heating effect. The models do seem to track the response of climate to aerosol concentrations over the 20th century fairly well. Some climate researchers believe that the recent leveling-off of warming may be at least partly due to an unusually high degree of volcanic activity in the corresponding time period.

Incidentally, there is a push to reduce global atmospheric soot concentrations, in particular by replacing inefficient cookstoves in developing countries with new stove designs providing more efficient combustion, the belief being that a dramatic reduction in soot would delay the onset of warming. This effort is aided by the fact that soot is also a health problem, and users would benefit from cleaner-burning stoves anyway.

Data available on what seems to have happened in the prehistoric past also suggest the sensitivity of the models is realistic. During the Ice Ages, the expanded polar icecaps did increase the reflectivity of the Earth, contributing to cooling, but studies show they couldn't have kept the Earth cool on their own. The Earth also had relatively low CO2 concentrations, and factoring in high sensitivity gives a reasonable fit to the known global temperatures. Before the Ice Ages, the Earth had slightly higher CO2 concentrations than today but was a good deal warmer, again suggesting high sensitivity.

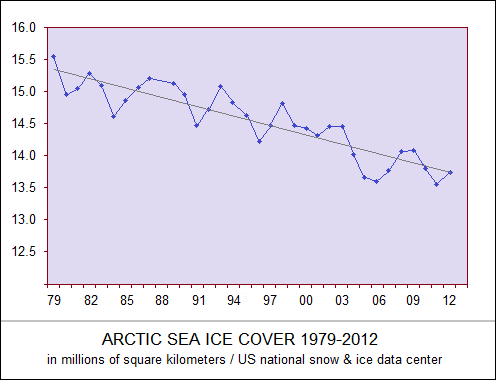

* The argument over AGW began to drop under the radar after 2010, all objections that had been raised by the critics having been addressed. There was no case in the science against it, and events were increasingly bearing out the science -- the rapid melting of the northern icecap and most mountain glaciers, along with rising sea levels, could no longer be honestly disputed. Unseasonably warm winter weather, if not a global phenomenon, became common enough to persuade most of the public that AGW was for real. The dispute lingers, but it is dissipating, the critics having been reduced to nit-picking, recycling thoroughly discredited claims, and generally making useless nuisances of themselves.

An international agreement to restrain climate change was hammered out in Paris in late 2015. The agreement was not seen as enough to address the problem, but it was seen as a significant step towards doing so. Resolution of the issue is dependent on global political will, and the will seems to be there. Since there is no shortage of ideas on how to deal with the problem, there is reason for confidence that it will be resolved.

Incidentally, AGW put the brakes on the move to replace CFCs with HFCs in refrigeration systems, since it turned out that HFCs are extremely potent greenhouse gases. Chemists proposed the use of "hydrofluoro-olefins (HFOs)" as an alternative, HFOs being implicated in neither ozone depletion nor climate change. There was considerable controversy over the suggestion. Skeptics said HFOs were an expensive solution that would primarily benefit the chemical companies, and proposed "natural" refrigerants instead: ammonia, propane or other hydrocarbons, high-pressure CO2, even dried air.
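"Extremely potent" can be put in numbers with the global warming potential (GWP). The GWP compares the warming from a kilogram of a gas over a set period with that from a kilogram of CO2. The sketch below uses HFC-134a, a widely used HFC refrigerant, and CO2 itself (refrigerant designation R-744), one of the "natural" alternatives mentioned above. The GWP figures are the 100-year values from the IPCC's Fourth Assessment Report. The leaked charge is a hypothetical example, and the function name is hypothetical too.

```python
# 100-year global warming potentials (IPCC Fourth Assessment Report values).
GWP_100 = {
    "CO2 (R-744)": 1,
    "HFC-134a": 1430,
}


def co2_equivalent_kg(refrigerant: str, leaked_kg: float) -> float:
    """Mass of CO2 (kg) with the same 100-year warming effect as the leaked refrigerant."""
    return leaked_kg * GWP_100[refrigerant]


LEAKED_CHARGE_KG = 0.5  # HYPOTHETICAL: one small air-conditioner charge lost to the air

for refrigerant in GWP_100:
    print(f"{refrigerant:12s}: {co2_equivalent_kg(refrigerant, LEAKED_CHARGE_KG):7.1f} kg CO2-equivalent")
```

On these figures, losing half a kilogram of HFC-134a has about the same 100-year warming effect as releasing 715 kilograms of CO2.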

Use of ammonia would seem to be going full circle, but ammonia is still used as a refrigerant in industrial applications, where the refrigeration unit can be isolated and not present a health hazard. Its toxicity means that it is unlikely to be returned to general use. In addition, while HFOs are flammable, they are not strongly so, but hydrocarbons like propane tend towards the outright explosive in gaseous form; and though dried air is hard to beat as far as being environmentally benign in itself, it's not highly efficient, meaning more expensive and energy-hungry refrigeration systems. There isn't a simple answer to the problem, and in fact there may be several answers, to be exploited in parallel. Discussions continue.

* Some researchers have suggested that if AGW does become too immediate a threat, we might need to perform "geo-engineering" to cool the planet. The most exotic scheme proposed so far is the idea of placing a constellation of "sunshade" spacecraft at the inner Earth-Sun Lagrange point, L1. This point lies about 1.5 million kilometers from the Earth toward the Sun. There, the combined pull of the Earth and Sun lets a spacecraft keep pace with the Earth in its orbit, so it can be kept on station with relatively little effort. Each spacecraft would be about a meter across, using solar-powered thrusters for positioning. The spacecraft would be shot into space using a magnetic launcher; the total mass of the constellation would be about 20 million tonnes.
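The L1 point lies on the line between the Earth and Sun, where the Sun's pull, the Earth's opposing pull, and the centrifugal effect of orbiting with the Earth all cancel. The minimal sketch below finds that point by bisection. It assumes circular orbits (the "circular restricted three-body" model) and compares the result with the common approximation r = R * (M_Earth / (3 * M_Sun))^(1/3). The masses and distance are rounded standard values, and the function name is hypothetical.

```python
M_SUN = 1.989e30    # kg
M_EARTH = 5.972e24  # kg
AU_KM = 1.496e8     # mean Earth-Sun distance, km


def l1_distance_km(iterations: int = 200) -> float:
    """Earth-to-L1 distance in the circular restricted three-body model, by bisection.

    Units: Sun-Earth distance = 1, total mass = 1, orbital angular velocity = 1.
    Origin at the barycenter, with the Sun at x = -mu and the Earth at x = 1 - mu.
    """
    mu = M_EARTH / (M_SUN + M_EARTH)

    def net_acceleration(x: float) -> float:
        sun = -(1 - mu) / (x + mu) ** 2      # pulls toward the Sun (negative x)
        earth = mu / (1 - mu - x) ** 2       # pulls toward the Earth (positive x)
        centrifugal = x                      # rotating-frame term, omega^2 * x
        return sun + earth + centrifugal

    lo, hi = -mu + 1e-9, 1 - mu - 1e-12  # net_acceleration < 0 at lo, > 0 at hi
    for _ in range(iterations):
        mid = (lo + hi) / 2
        if net_acceleration(mid) < 0:
            lo = mid
        else:
            hi = mid
    return (1 - mu - lo) * AU_KM


hill_estimate_km = AU_KM * (M_EARTH / (3 * M_SUN)) ** (1 / 3)
print(f"L1 by bisection:    {l1_distance_km():,.0f} km from Earth")
print(f"Hill approximation: {hill_estimate_km:,.0f} km")
```

Both methods put L1 about 1.5 million kilometers from the Earth, roughly one percent of the way to the Sun. Between the two bodies the net acceleration always increases with distance from the Sun, so there is exactly one crossing for the bisection to find.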

A second approach takes a hint from nature. As mentioned above, volcanic eruptions can throw particulates into the upper atmosphere that cause a cooling effect; a massive program could be started to inject harmless aerosols into the upper atmosphere to achieve the same effect. Others have suggested the scheme might be used locally, for example to help preserve the polar icecaps.

A third idea involves spraying droplets of seawater into the air to generate low-lying, highly reflective oceanic clouds. This scheme could be implemented by a fleet of unmanned vessels that could generate the sprays using wind power, with each vessel handling 10 kilograms of seawater a second. About 100 vessels would be needed to cool off the Earth, though only 50 would be needed once the climate was stabilized. The fleet could be dispatched to the North Atlantic in the summer to protect the Greenland ice sheets, and transfer to Antarctica six months later. Cooling clouds could be used to lower sea temperatures in tropical areas, and help prevent hurricanes from forming.

Other ideas have included seeding the ocean with nutrients, for example iron, to encourage the growth of photosynthetic plankton that would soak up carbon dioxide; encouraging the planting of fast-growing trees; setting up networks of CO2 scrubber stations; and covering deserts with reflective sheets.

There has been great skepticism over geo-engineering schemes, on the basis of their cost, practicality, and effectiveness. Some of the skeptics have opposed the idea on principle, arguing that it encourages people to believe that there is a quick technological fix to the problem of climate change, discouraging efforts to take real action. However, advocates can point out that if matters go from bad to worse too rapidly, the technological fix might be the only thing available to hold off disaster, and so we should at least know what options are available.

* This document started life as part of a general survey of chemistry, covering history, biochemistry, environmental chemistry, and technology. I ultimately decided it was cumbersome, and so in 2018, I broke it into separate documents, giving this document a new revcode sequence. This initial document is effectively a stub, to be fleshed out when time permits.

I really didn't use many formal sources for this document, instead abstracting it from readings of articles in the scientific and popular press. As concerns copyrights and permissions for this document, all illustrations and images credited to me are public domain. I reserve all rights to my writings. However, if anyone does want to make use of my writings, just contact me, and we can chat about it. I'm lenient in giving permissions, usually on the basis of being properly credited.

* Revision history:

v1.0.0 / 01 may 18 / Split off from INTRODUCTION TO CHEMISTRY.

v1.0.1 / 01 apr 20 / Review & polish.

v2.0.0 / 01 aug 22 / Restored chapters on biochemistry & environment.

v2.1.0 / 01 jul 24 / Rewriting on genomics.